The Quiet Loneliness of the Digital World

For decades, video games have been a medium of profound but one-sided communication. We, the players, would pour our intentions into plastic controllers, and the world would react with pre-programmed precision. We spoke to the world through our actions, but when we encountered its inhabitants—the Non-Player Characters (NPCs) who populate our favorite digital landscapes—we were met with a script. A beautiful, often poignant script, but a rigid one nonetheless.

There is a specific kind of loneliness in a dialogue tree. No matter how much we wanted to ask a shopkeeper about their day or debate a wizard on the ethics of fireballs, we were limited to the options the writers had anticipated. We were observers of a story, even if we were the ones driving it. But today, a quiet revolution is taking place. The silence is breaking. Video game characters are finally starting to listen and, more importantly, to talk back in ways we never thought possible.

The Breaking of the Fourth Wall: From Scripted to Spontaneous

The transition from static dialogue to dynamic conversation is not merely a technical upgrade; it is a fundamental shift in the philosophy of play. At the heart of this transformation lie two pillars of modern technology: advanced voice recognition and sophisticated text-to-speech (TTS). When these technologies converge with Large Language Models (LLMs), the boundary between the player and the game world begins to dissolve.

In the past, voice recognition in gaming was a novelty—a gimmick that often required perfect pronunciation and a very specific set of commands. Today, the focus has shifted toward natural language understanding. A character doesn’t just wait for a keyword; they interpret intent. This allows for a level of spontaneity that mimics human interaction. When a character responds to your actual voice, rather than a button press, the immersion moves from the screen into the room with you.
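To make the difference between waiting for a keyword and interpreting intent concrete, here is a small Python sketch. It is only a toy: the intents, example phrases, and function names are invented for illustration, and the word-overlap score stands in for the language model a real game would use.

```python
import re

# Hypothetical intents for a shopkeeper NPC. The names and example
# phrases are invented for this illustration.
INTENT_EXAMPLES = {
    "buy": ["i want to buy something", "show me what you sell",
            "what do you have for sale"],
    "small_talk": ["how was your day", "how are you doing",
                   "what is new in town"],
    "farewell": ["goodbye", "see you later", "i have to go"],
}


def tokens(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def keyword_command(utterance):
    """Old-style matching: only an exact command word is accepted."""
    commands = {"buy": "buy", "bye": "farewell"}
    return commands.get(utterance.strip().lower())


def guess_intent(utterance):
    """Toy intent guess: pick the intent whose example phrase shares
    the largest fraction of words with the utterance (Jaccard overlap)."""
    words = tokens(utterance)
    best_intent, best_score = None, 0.0
    for intent, examples in INTENT_EXAMPLES.items():
        for example in examples:
            example_words = tokens(example)
            union = words | example_words
            score = len(words & example_words) / len(union) if union else 0.0
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent, best_score
```

A player who says "So, how was your day?" gets nothing from `keyword_command`, because the phrase is not on the command list. `guess_intent` still lands on `small_talk`, since the words overlap with an example even though the wording is not identical.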

The Technology of Listening

For a game character to truly listen, the processing must feel near-instantaneous. This is where the industry is seeing a significant shift toward local speech processing. As we have explored in our discussions on secure environments, the round trip of sending a player’s voice to a cloud server and waiting for the reply adds latency that can shatter the illusion of a conversation. By embedding voice recognition directly into the game engine or the local hardware, developers are creating characters that react in real time, catching the nuances of a player’s hesitation or excitement.
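One way to reason about that delay is to time each step of the listen, think, speak loop and compare the total against a response budget. The sketch below assumes a three-stage pipeline with stand-in functions; the stage names, the stubs, and any budget you pass in are hypothetical, not measurements from a real engine.

```python
import time


def run_timed(stages, payload, clock=time.perf_counter):
    """Run each (name, function) stage in order.

    Returns the final output and a dict of per-stage durations in
    milliseconds. The clock is injectable so the timing can be tested.
    """
    timings = {}
    for name, stage in stages:
        start = clock()
        payload = stage(payload)
        timings[name] = (clock() - start) * 1000.0
    return payload, timings


def fits_budget(timings, budget_ms):
    """True if the summed stage durations stay within the budget."""
    return sum(timings.values()) <= budget_ms


# Stand-in stages (assumptions): a real game would call its own
# speech recognizer, dialogue model, and speech synthesizer here.
def recognize(audio):
    return "hello there"


def respond(text):
    return f"You said: {text}"


def synthesize(text):
    return text.encode("utf-8")


PIPELINE = [("recognize", recognize), ("respond", respond),
            ("synthesize", synthesize)]
```

Calling `run_timed(PIPELINE, b"raw-audio")` returns the synthesized bytes along with a per-stage breakdown, which makes it easy to see whether a network hop or a slow model is the stage that breaks the budget.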

The Art of the Response

Listening is only half of the equation. The “talking back” requires a voice that feels alive. Gone are the days of robotic, monotone delivery. Modern text-to-speech can carry emotional weight, adjusting tone, pitch, and cadence to fit the context of the conversation. If a player is aggressive, the NPC might respond with a tremor of fear or a defensive snap. This emotional resonance is what transforms a tool into a companion.
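As a rough illustration of how context can shape delivery, the sketch below maps an emotion label to pitch, rate, and volume settings and wraps the line in SSML, the W3C markup many speech engines accept. The emotion names and the chosen settings are our own assumptions. Engines differ in which `prosody` values they honor, and a strict SSML document would also carry `version` and namespace attributes on `speak`.

```python
from xml.sax.saxutils import escape

# Hypothetical mapping from an NPC's emotional state to SSML prosody
# labels. The emotions and settings are illustrative, not tuned values.
PROSODY_BY_EMOTION = {
    "neutral": {"pitch": "medium", "rate": "medium", "volume": "medium"},
    "fearful": {"pitch": "high", "rate": "fast", "volume": "soft"},
    "defensive": {"pitch": "low", "rate": "fast", "volume": "loud"},
    "weary": {"pitch": "low", "rate": "slow", "volume": "soft"},
}


def to_ssml(text, emotion="neutral"):
    """Wrap a line of dialogue in a prosody element for the given emotion.

    Unknown emotions fall back to neutral delivery, and the text is
    XML-escaped so characters like & do not break the markup.
    """
    prosody = PROSODY_BY_EMOTION.get(emotion, PROSODY_BY_EMOTION["neutral"])
    return (
        f'<speak><prosody pitch="{prosody["pitch"]}" '
        f'rate="{prosody["rate"]}" volume="{prosody["volume"]}">'
        f"{escape(text)}</prosody></speak>"
    )
```

An aggressive player might push the character into the `fearful` state, so `to_ssml("Stay back!", "fearful")` asks the engine for a higher, faster, quieter reading of the same words.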

More Than Code: The Emotional Weight of Conversation

Why does this matter? Why do we care if a digital blacksmith can comment on the specific armor we’re wearing in a voice that sounds tired from a day at the forge? The answer lies in our innate human desire for connection. When a game recognizes our unique input, it validates our presence within that world. It tells us that we aren’t just a ghost passing through a pre-rendered cemetery; we are a participant in a living history.

This evolution offers several profound benefits to the gaming experience:

- Unprecedented Immersion: Removing the UI of a dialogue box allows players to stay focused on the world and the character in front of them.

- Emergent Storytelling: No two players will have the exact same conversation, leading to a personal narrative that feels uniquely theirs.

- Enhanced Accessibility: For players with motor impairments that make traditional controllers difficult to use, voice-driven interaction opens new doors to complex gaming experiences.

- Dynamic World-Building: NPCs can react to events in the game world that the developers couldn’t possibly have scripted for every individual scenario.

The Ethical Echo: Privacy and the Human Connection

As we reflect on this shift, we must also consider the responsibilities it brings. A world that listens is a world that records. As voice interfaces become standard in everything from automotive systems to home consoles, the sanctity of a player’s private space becomes a paramount concern. This is why the push for embedded, local voice recognition is not just a technical preference but an ethical one. Ensuring that a player’s voice stays within their device is essential to maintaining the trust required for deep immersion.

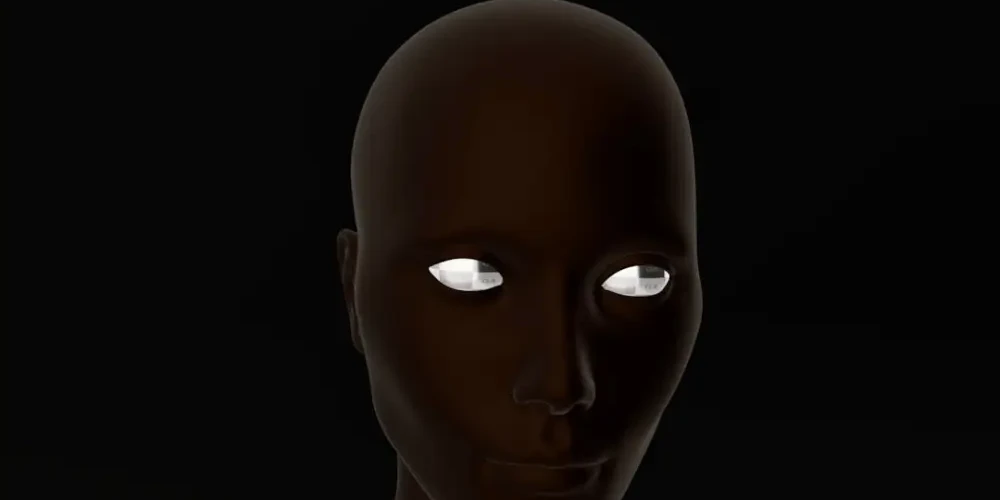

Furthermore, there is the question of the “uncanny valley.” As characters become more human-like in their speech and comprehension, the moments where they fail—where the logic breaks or the voice glitches—can feel more jarring than they did in the era of text boxes. We are treading a fine line between creating a companion and creating a mirror that occasionally cracks.

Conclusion: The Beginning of a New Dialogue

We are standing at the threshold of a new era in digital entertainment. The transition from “Press E to talk” to simply speaking is a journey toward a more empathetic and reactive form of media. It challenges developers to think not just as writers, but as architects of personality. It challenges players to engage with their games not just with their thumbs, but with their minds and voices.

As we look forward, the goal isn’t just to make games that are more realistic, but to make them more resonant. When a video game character finally looks you in the eye and responds to your words with a thought of their own, the world feels a little less like a simulation and a little more like a home. The silence is over, and the conversation is just beginning.
